Private, Public, Hybrid: Picking the Right Cloud

Determining the best cloud hosting approach for your organization can be complicated. Private, public, hybrid—which one is right for you? At Woolpert, we’ve had to make that very same decision. Here’s an inside look at how we selected a cloud along with some practical advice for picking yours.

The Story of SmartView Connect

At Woolpert, we employed a hybrid cloud solution for one project/product in particular: SmartView Connect. What is SmartView Connect, and how does it relate to the cloud?

A large part of Woolpert’s business is collecting, processing and delivering high-quality raster imagery to clients. A critical component of this process is a quality control (QC) step in which clients view their images and either accept them or flag issues requiring additional attention. Clients use SmartView Connect to securely access this pre-delivery imagery and perform online QC.

Private Cloud: Line-of-business Application

From its inception in 2008 until early 2020, SmartView Connect ran entirely on Woolpert’s private cloud, using VMware vSphere with an associated storage cluster. This made sense because the application could run close (in network terms) to the massive amounts of potentially deliverable imagery that must be shared with clients.

This very common approach was taken by many businesses over the past decade. Basically, a company surfaces one or more controlled data centers to the internal development and systems team using something like vSphere. This is so common, in fact, that solutions exist specifically to migrate vSphere-hosted applications wholesale to a public cloud.

Your organization may use a very similar setup for geospatial applications.

Public Cloud: Extracting STREAM:RASTER from SmartView Connect

Over the course of 2019, Woolpert’s geospatial team heard from clients that not only was SmartView Connect a great tool for data QC, but they also liked the idea of Woolpert using it to provide final data as a streaming service in addition to traditional delivery methods (hard drives, FTP, etc.). Since we already had their data, the conversation went, couldn’t we just flip a switch and stream that data for a monthly fee?

In mid-2019, the Woolpert Cloud Solutions team decided to extract the SmartView Connect backend into a separate product and reimagine it as a cloud-native service. Thus, STREAM:RASTER was born!

STREAM:RASTER provides streaming hosting of raster datasets, whether sourced from Woolpert or elsewhere. It’s a straight-up software-as-a-service (SaaS) proposition using a consumption-based pricing model.

We decided to put STREAM:RASTER on an entirely public cloud platform because we needed the horizontal and elastic scale to process and serve massive quantities of data without affecting business-critical workflows inside the Woolpert firewall. This approach helped us avoid pushing our production work onto a new platform without proof of its effectiveness.

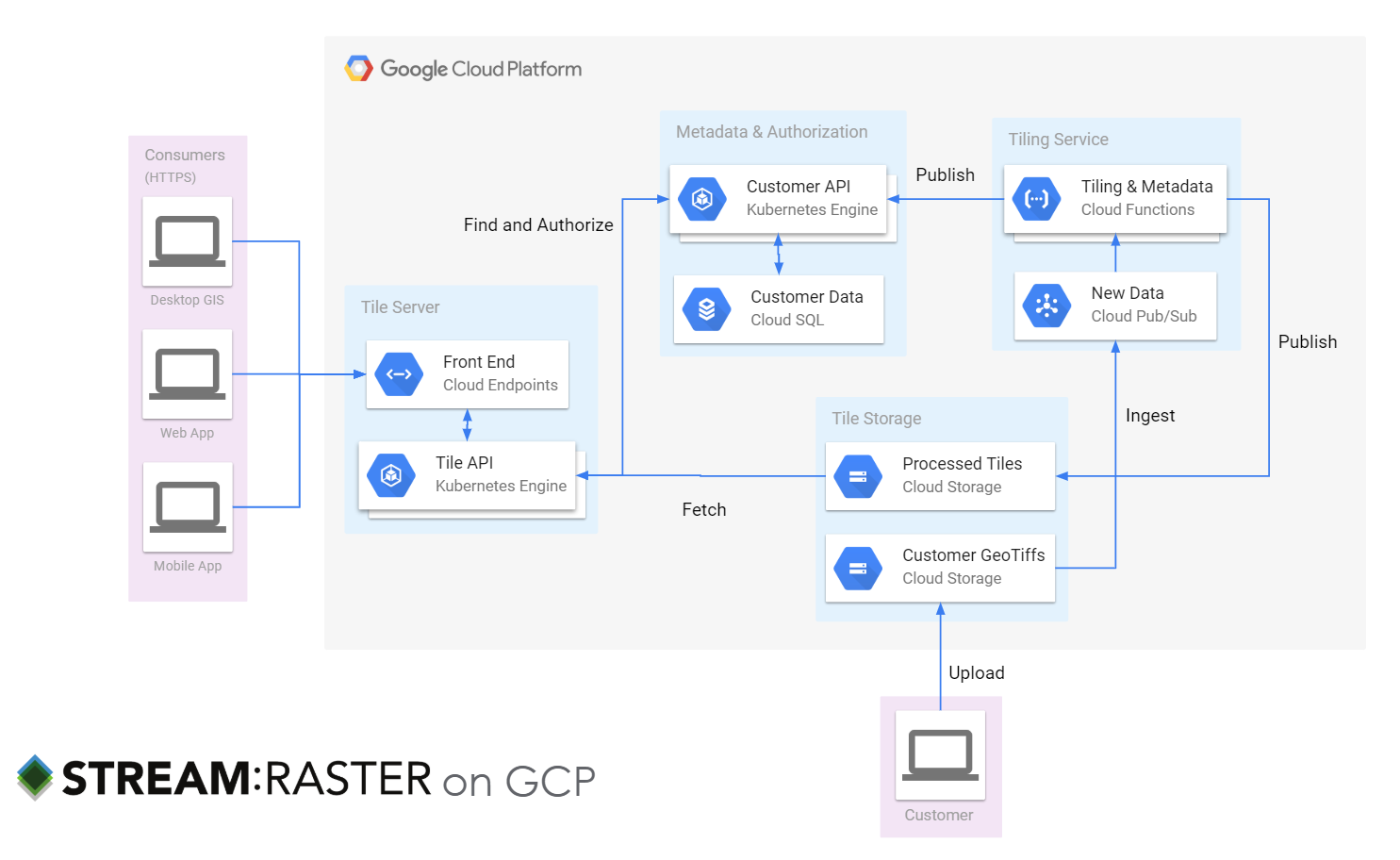

Here’s roughly what STREAM:RASTER looks like deployed on GCP:

Why a public cloud? You can see that we decided to leverage managed services in the cloud for key “plumbing,” e.g., Cloud SQL for metadata, Google Cloud Storage for multi-region scalable object storage, and Cloud Endpoints for baked-in security and reliability. Doing so gave us time to focus on key aspects of our own IP—our know-how on processing and tiling images and serving GIS client software.

Why GCP? Simply put, Google is a strategic Woolpert partner and we really like the technology! That being said, the decision-making process is the same regardless of the vendors you evaluate. For us, it came down to a carefully considered risk: Is it better to lock in to a single vendor’s hosted database as a service, or to roll out our own and stay cloud agnostic? We decided that it made the most sense to let others worry about infrastructure issues, such as whether PostgreSQL was patched, so that we could focus on the intricacies of our map projections.

I’m happy to report that STREAM:RASTER is going well as a SaaS running exclusively on GCP. But whatever happened with SmartView Connect?

Sustainable Growth Requires Adaptation

By 2019, two things had changed to make the purely private cloud approach for SmartView Connect less ideal for today and even less tenable over the long term.

- More clients: Woolpert continued to sign new contracts for data collection and delivery. While that’s great for us, it does cause more data to flow through our production systems every day—and our clients still want to view it in SmartView Connect.

- Higher-resolution data: Advances in sensor technology have increased the resolution of data. If we collect data at 3-inch resolution now, compared with 6-inch in the past, we need four times the storage for the same collection area, because halving the pixel size doubles the pixel count along each axis. Clients get better products, but those products require a lot more storage space.
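The storage arithmetic in the second bullet can be sketched in a few lines. This is a rough illustration only: it assumes the same collection area, band count, bit depth and compression ratio before and after, and the function name `storage_factor` is ours, not part of any Woolpert product.

```python
def storage_factor(old_resolution_in: float, new_resolution_in: float) -> float:
    """Approximate growth in pixel count (and so raw storage) for the same area.

    Resolutions are pixel sizes on the ground, in inches. Pixel count scales
    with the square of the ratio because both image axes get denser.
    """
    if old_resolution_in <= 0 or new_resolution_in <= 0:
        raise ValueError("resolutions must be positive")
    return (old_resolution_in / new_resolution_in) ** 2


# Moving from 6-inch to 3-inch pixels: twice as many pixels per axis.
factor = storage_factor(6.0, 3.0)
print(factor)  # 4.0
```

Real deliverables will deviate from this figure depending on compression and overviews, so treat it as a planning estimate rather than a sizing guarantee.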

The good news is that we had a new asset to consider as part of planning—STREAM:RASTER. The fact that we had engineered a product to scratch our own itch is hardly a coincidence! Then, we faced another decision that ties into the private/public/hybrid cloud trifecta: Should we move SmartView Connect to the public cloud as well?

Geospatial App, Hybrid Cloud

A hybrid cloud is where the magical thinking of “Let’s just shift everything to the cloud!” comes into abrupt contact with the realities of “lift and shift,” replatforming and rewriting as cloud native.

Much has been written on this topic, and there are even nice project plans to follow, but I encourage you to think carefully first about your business drivers and then about technical constraints.

We have expertise in cloud migrations—we’re the Woolpert Cloud Solutions team, after all. We know how to do this. But we also faced a business constraint: We couldn’t alter SmartView Connect in any way that could cause a slowdown in the production pipeline during the second quarter of 2020. The risk of attempting any kind of migration of the core SmartView Connect application from our private vSphere cloud to GCP was too great.

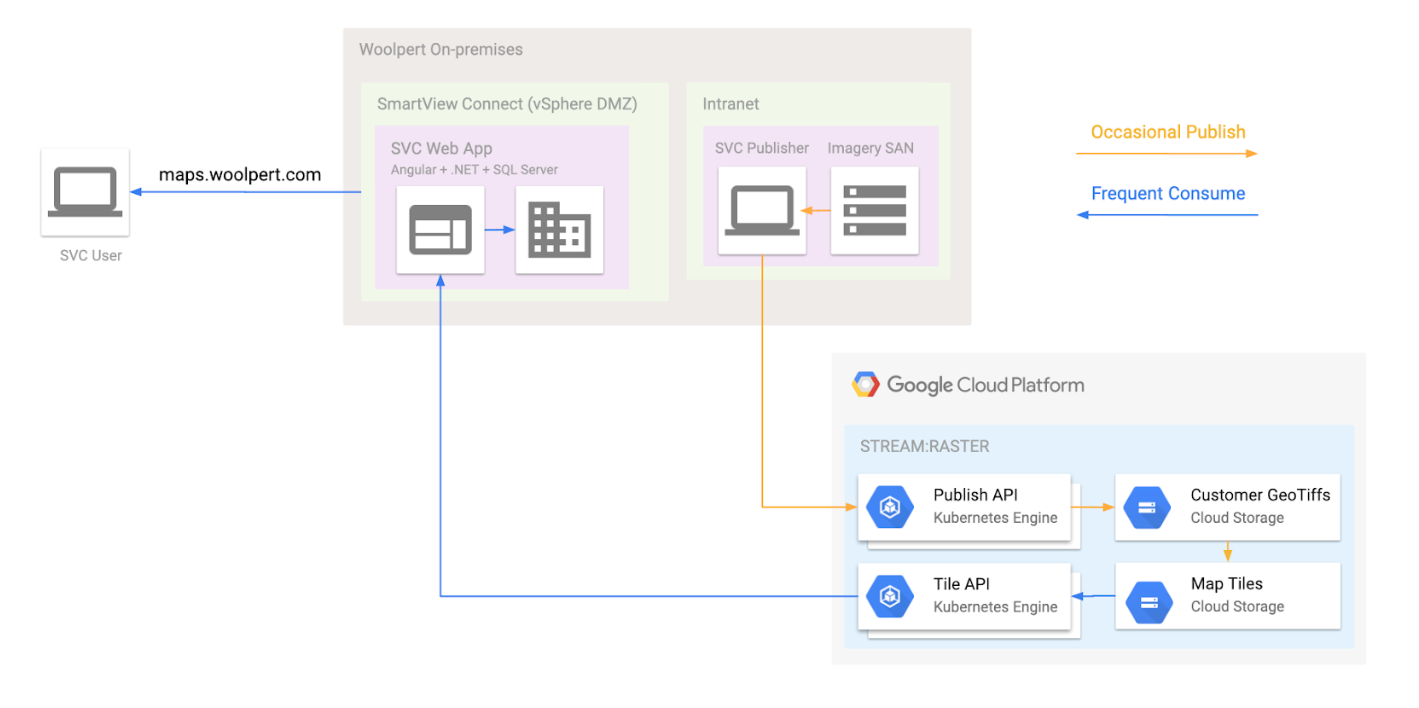

If we couldn’t risk moving the web app to a public cloud, and we wanted to use this great new public cloud SaaS, we were left with just one option: Make SmartView Connect a hybrid cloud application. Here’s where we landed:

The simplified version of STREAM:RASTER is a highly available, low latency, durable raster tile server. We modified SmartView Connect to work directly with STREAM:RASTER as a data source. We applied DevOps to SmartView Connect on vSphere, e.g., building a CI pipeline and rigorous run books for deployment and disaster recovery.

Is Great for Woolpert Great for Me?

I hope that recounting our journey with SmartView Connect clearly shows how Woolpert has dealt with a specific private/public/hybrid decision.

A common question we hear from clients is how to scale up their existing GIS infrastructure. As a Google Maps partner, our answer for many commercial clients often looks more like an optimization exercise around API consumption and less like a debate over which type of cloud to implement.

For many public sector customers, like state and local governments, a more common need is shifting an Esri infrastructure to the geospatial cloud. Esri supports some amazing services like ArcGIS Online and provides documentation on how to get there.

We migrated a utility client to GCP this year, and our consultants spent time replatforming the base ArcGIS deployment to take advantage of unique GCP features, such as a globally distributed load balancer instead of a web adaptor. The benefits? Observability, potentially increased reliability and quicker adaptation (like adding memory to an ArcGIS GIS server role). In this case, the client decided to perform data editing and administration in the public cloud on a Windows virtual machine and allow other people in the organization to access the data directly (hybrid cloud).

So you see, this decision doesn’t have to be novel or really even that creative—but it is eminently practical. Predictability is a trademark feature of a good geospatial cloud application!

Summary

I’ll leave you with some parting thoughts as you contemplate your shift to the geospatial cloud.

Public by default; hybrid by design. For on-premises workloads ripe for change, think public cloud by default, then back into sensible deviations from there. For me, that often means a well-crafted hybrid cloud solution, especially when shaped around network and security integration points.

Flatten the differences where possible. Use tools that level the differences across your total cloud portfolio. At a low level, we see containerization as a good starting point. Look back at the STREAM:RASTER diagram, and you’ll see that we are heavy users of Kubernetes. When you expand from containers as an atomic unit of compute, you can use products that smooth over the differences with unified control planes and tooling. Check out full-featured products such as Google Anthos, which runs on private clouds and multiple public clouds.

Make delivery choices around XaaS (anything as a service). For workloads with pre-built solutions, make deliberate and defensible choices. Ask yourself these important questions:

- What will be difficult to change later? Make these aspects the focus of careful decision-making. Will the vendor still be viable? Is the vendor likely to add any core values that you don’t want/need to build in house?

- Are you outsourcing a core component of your organizational value? For example, is serving large quantities of geospatial data a core value of your county GIS, or is the value found in the data creation?

Replatform. If your workloads aren’t immediately amenable to a cloud-native architecture, don’t despair. You can still use the compatible parts of a public cloud to bring business value. For example, you can attach hard drives to virtual machines with cross-regional uptime guarantees or use load balancers to provide additional security benefits.

I’ll close with a quote from the National Oceanic and Atmospheric Administration’s (NOAA) draft cloud strategy. Being a heavily geospatial organization, NOAA’s position is worth considering:

“Traditionally, modernization initiatives have started with the legacy architecture, followed by an evaluation of architectural alternatives for meeting the requirements. The strategy requires a fundamentally different and opposite approach – one that starts with and presumes an end state architecture (specifically, a multi-vendor, multi-tenant commercial cloud environment), followed by a requirements and business case analysis to determine the suitability of cloud for the requirements, and if suitable then determine the best permutation of this architecture (e.g., private, hybrid, public) for the requirements.”

NOAA

And as always, assess, plan, deploy and optimize.